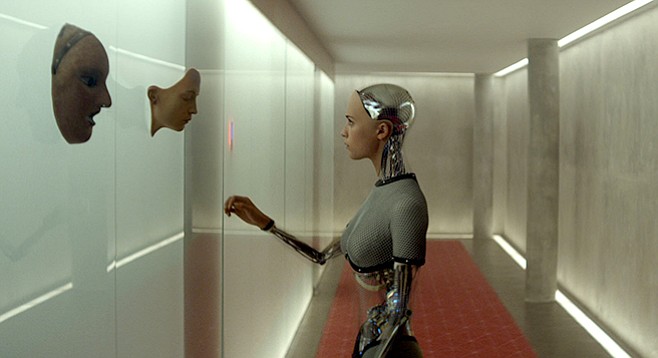

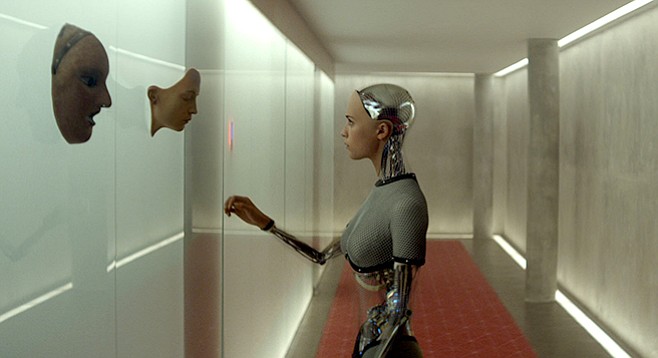

A bright young man at a fancy tech company (Domhnall Gleeson) gets picked to visit the company’s founder (Oscar Isaac) in his country home, er, homey concrete fortress. There, he is introduced to Ava (Alicia Vikander), a sweet and pretty robot who might just be the world’s first Artificial Intelligence. Our hero’s mission: talk to her and find out if she’s the real deal. Ava is a wonder: unlike <em>Her</em>'s Samantha, whose lack of a body made for a fundamental disconnect with her beloved, <em>this</em> lady AI is not only embodied but also anatomically correct, a feature which may complicate our assessor's task. But it's Isaac — buffed, bald, and bearded — who is the film’s dramatic center as Nathan, a man betrayed by his own genius. He struts, he speechifies, he schemes, he scolds, all friendly menace and sly frankness. (He’s really smart, and certainly self-conscious, but is he entirely human?) Writer-director Alex Garland (writer of <em>28 Days Later</em>) makes excellent use of his spooky, locked-down setting; ups the tension with a sure, slow hand; and delivers a satisfying, unsettling ending.

Artificial intelligence — the notion of a sentient machine — has had a bit of a run at the movies of late, with some entries (Her) more successful than others (Chappie). I’m putting Ex Machina on the success side of the ledger. It reminded me of Gattaca in its sleek, moody presentation and its close observation of humans struggling to navigate the murky moral waters in which they find themselves.

Matthew Lickona: Why make an AI movie?

Alex Garland: I love science fiction. If you look at issues of AI — humanlike artificial intelligence — it ends up relating to problems that have to do with human consciousness. That kind of stuff is very enticing; it does that classic sci-fi thing where by talking about one thing, you’re also talking about another thing. And I just find it attractive that sci-fi can include those big ideas.

ML: Was there a particular work that sparked you?

AG: There are so many, it would be hard to pick just one. I was maybe 11 when I saw 2001, which has a really brilliant depiction of an AI. And there’s Alien, which has another fantastic depiction. And Blade Runner. It goes on and on... In terms of books, there’s a stack of them, but there was this one by Murray Shanahan, Embodiment and the Inner Life: Cognition and Consciousness in the Space of Possible Minds. It was while reading that that I got the specific idea for this movie. Maybe they all led to that one book.

ML: The film gives a bit of a tweak to the Turing Test, which is famously thought to help determine if an AI is truly conscious. The inventor, Nathan, says that in his test, he wants to show Caleb that he’s talking to Ava the robot, and then see if he nevertheless still feels that she’s conscious. That seems to place the measure in the realm of feeling instead of the realm of perception.

AG: Well, yeah. It’s partly a comment on the Turing Test. I think that popular culture often slightly misinterprets science, though in a reasonable way. In the case of the Turing Test, I think it has become current to say that it’s a test for sentience. But the Turing Test is primarily to see if you can pass the Turing Test. It’s a test of facility with language; you could theoretically pass it without being sentient is what I’m saying. Though I do think it’s a brilliant test, and incredibly difficult to pass, as evidenced by the fact that it’s never been passed. I just felt that this film had to go to the next step: Ava had to not just be able to convince you that she’s human. She also actually had to be humanlike.

ML: A lot of AI films get into the religious element, the question of whether the maker has the authority to destroy his creation. One of the things that struck me here was Nathan’s seeming eagerness to abandon that authority. He places himself on an evolutionary continuum, and talks about how AIs will replace us.

AG: There’s a religious argument to those AI films, the claim that man should not do God’s work — which is to create new life forms — because if he does, he’ll get his fingers burned. My film is, as you said, evolutionary in its view, but it’s not actually making a religious point. It has to do with the way we conceptualize AI. We’re threatened by AIs, scared of them. They’re presented as malevolent and frightening, and it’s a view that’s represented in the news these days. You have people like Elon Musk and Stephen Hawking saying that we have to be very careful with AIs, because they could destroy us and supersede us.

In some respects, I was trying to address that anxiety, saying that we don’t have to see AIs as rival creatures that exist on a parallel track with us, racing against us and leaving us behind. Instead, see them as being on the same track — as a continuation of us. The relationship between us and them is analogous, roughly, to the relationship between parents and children. Parents create new consciousnesses routinely; it’s the most normal thing in the world. If you choose to see the AI as more analogous to the child, the rivalry thing starts to fall away. Because what you want for your child is to have a good life and live longer than you did. Instead of being problematic, these things actually become desirable.

ML: I enjoyed the presentation of tech god Nathan. Watching him, I thought of the line that people stop developing at the point when they get famous. Nathan got famous for writing his killer program at 13, and when we meet him, he’s sitting around and drinking, working on his body, and making dance parties for himself, among other things. Was that idea at work here?

AG: I wish it had been, because it’s true, the way people get arrested at that point. People become famous in their late teens, and they stay stuck in a kind of adolescence forever and ever. But if I used that notion, it was unconscious. On a conscious level, Nathan was composed from various elements. Some of it was Kurtz — a guy who has spent too much time up river, and who doesn’t have enough factors around him to modify and limit his behavior. Some of it was someone isolated by his intelligence. Several times, I’ve noticed very smart people start to separate from a conversation and just go into their own heads.

But also, quite significantly, he’s supposed to be a representation, in some way, of big tech companies like Google and Apple. I’m not talking about specific individuals like Mark Zuckerberg or Steve Jobs. It’s more specific as a tone, the way these tech companies present themselves as being your friend, when in fact, they’re not your friend — they’re massive, massive businesses. They present themselves as your hipster buddy who is notionally unthreatening: “Let’s go hang out at the beach!” But they’re your hipster buddy who is also going through your address book and writing down all the names you keep in there and checking everyone you ever phoned and taking dollar bills out of your wallet every now and then.

ML: Most of the film takes place at Nathan’s house, and so the setting becomes hugely important. I was taken with the sort of “comfortable, cozy concrete bunker” aesthetic you managed. Could you talk about creating that?

AG: The starting point was that we were a low-budget film. Obviously, $15 million is a huge amount of money, but in film terms, it really isn’t a huge amount of money. And a big chunk of that went for the visual effects to create Ava. We knew that audiences are savvy, that they’ve seen $200 million films, and you can’t get away with being cheap and cheerful. So we had to create the house of someone who is staggeringly wealthy, and we had to do it on a budget. The typical solution for that when you’re making an independent movie is, you have to find it somewhere. We had to find a section of a property where we could film, and then pull the aesthetic and spread it out in a way that was sympathetic to the themes of the film. We didn’t want a cold, antiseptic, hospital sort of sci-fi. Nathan is interested in art; he’s sort of semi-cultured. So we wanted something warmer and more diffused and gentle. It began with just searches online, looking at photos of many, many houses and locations. Eventually we found a place in Norway that had the right landscape and the right building.